Contextual Display

US Patent Application US20190199935A1, filed by Elliptic Laboratories AS

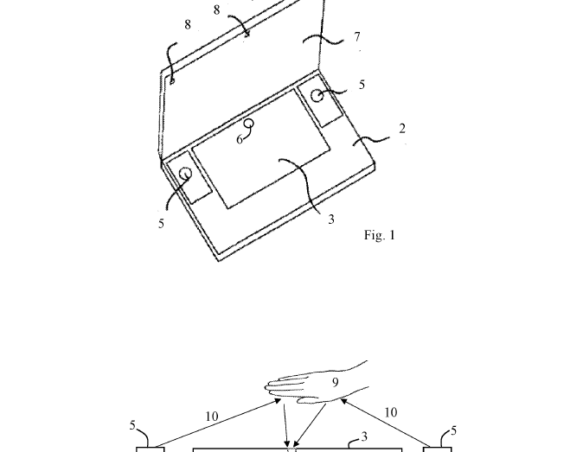

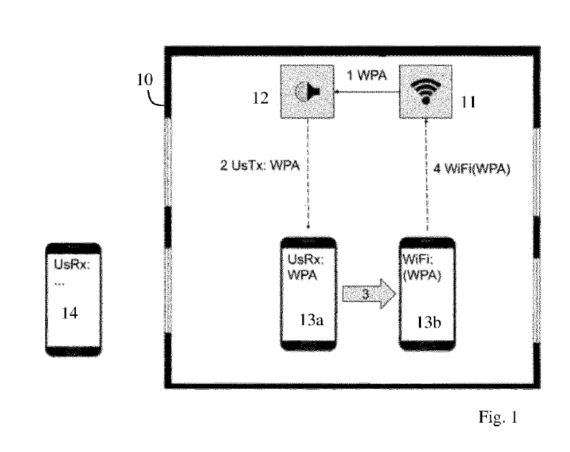

This invention enables a smartphone or similar device to adapt its screen content to the distance between the user and the screen, without requiring touch input. By combining ultrasonic distance and motion sensing with facial recognition, the system automatically changes what is shown depending on context.

For example, when the user holds the phone at arm’s length, the screen might show a full camera view. When the phone is moved closer to the face, the screen could automatically zoom in or switch to image review mode. This allows intuitive, one-handed operation for tasks like taking selfies or reviewing photos, even in situations where touch controls are impractical.
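The arm's-length example can be sketched as a simple threshold on the measured distance. This is a minimal illustration only: the mode names, the `select_mode` function, and the 0.30 m threshold are assumptions made for the sketch and are not taken from the patent application.

```python
from enum import Enum


class DisplayMode(Enum):
    """Hypothetical display modes, named after the example in the text."""
    CAMERA_VIEW = "camera_view"
    IMAGE_REVIEW = "image_review"


# Assumed threshold in metres; the application does not state a value.
NEAR_THRESHOLD_M = 0.30


def select_mode(distance_m: float) -> DisplayMode:
    """Pick a display mode from the user-to-screen distance in metres."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    # Closer than the threshold: review mode; otherwise: full camera view.
    if distance_m < NEAR_THRESHOLD_M:
        return DisplayMode.IMAGE_REVIEW
    return DisplayMode.CAMERA_VIEW
```

Calling `select_mode(0.6)` would select the camera view for a phone held at roughly arm's length, while `select_mode(0.2)` would select image review. A real device would likely smooth the distance signal before switching so the mode does not flicker near the threshold.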

The innovation lies in using ultrasonic signals to measure distance, making the system more reliable and responsive than previous methods that rely only on cameras or touch. The display can switch between different modes, or even animate smoothly between them, based on how close the device is to the user.
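As a rough sketch of how these two ideas could fit together, the snippet below converts an ultrasonic echo's round-trip time into a distance and maps that distance to a continuously varying zoom factor. It assumes simple echo ranging (distance equals speed of sound times round-trip time, divided by two) and a linear blend between two distances. The function names, the 0.25 m and 0.60 m limits, and the 2x maximum zoom are hypothetical choices for illustration, not details from the application.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at 20 °C


def echo_distance_m(round_trip_s: float) -> float:
    """Distance to the reflector from an echo's round-trip time in seconds."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The signal travels to the user and back, so halve the path length.
    return SPEED_OF_SOUND_M_S * round_trip_s / 2.0


def zoom_factor(distance_m: float,
                near_m: float = 0.25,
                far_m: float = 0.60,
                max_zoom: float = 2.0) -> float:
    """Blend linearly from 1.0 (at far_m or beyond) to max_zoom (at near_m or closer)."""
    if far_m <= near_m:
        raise ValueError("far_m must be greater than near_m")
    # Fraction of the way from the far limit to the near limit, clamped to [0, 1].
    t = (far_m - distance_m) / (far_m - near_m)
    t = min(1.0, max(0.0, t))
    return 1.0 + t * (max_zoom - 1.0)
```

Under these assumptions, an echo with a 2 ms round trip corresponds to about 0.34 m, which falls between the two limits and therefore yields an intermediate zoom rather than an abrupt mode change.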