Touchless User Interfaces – Navigation Building Blocks

WO2013132242A1

Filed by Elliptic Laboratories AS

This invention defines an interaction paradigm in which 3D hand gestures, detected via ultrasonic sensing, control user interface elements on a 2D screen without any touch. It introduces key principles for structuring and interpreting spatial gestures, enabling a responsive and layered user experience beyond traditional touchscreen interaction.

1. Spatially-Aware Gesture Zones

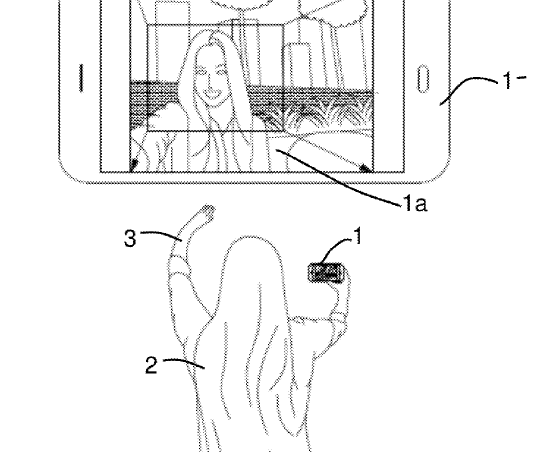

The screen is logically partitioned into distinct regions, each capable of responding differently to a gesture based on the hand’s position relative to the device. For example, a swipe gesture performed over the screen’s edge may trigger a different function than the same gesture in the center. This spatial context mimics and extends the gesture grammar found in modern touch interfaces, but without physical contact.
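As a rough illustration of the partitioning idea, the sketch below classifies a hand position into a named zone. The zone names, the normalized coordinate convention, and the `edge` band width are assumptions made for this example; the patent summary does not specify them.

```python
def classify_zone(x: float, y: float, edge: float = 0.15) -> str:
    """Return a zone name for a hand position in normalized screen coordinates.

    x and y run from 0 to 1 across the screen (y grows downward).
    `edge` is a hypothetical width for each edge band.
    """
    # A hand hovering beside the device falls outside the screen rectangle.
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        return "outside"
    # Left and right bands take priority over top and bottom in the corners.
    if x < edge:
        return "left_edge"
    if x > 1.0 - edge:
        return "right_edge"
    if y < edge:
        return "top_edge"
    if y > 1.0 - edge:
        return "bottom_edge"
    return "center"


print(classify_zone(0.05, 0.5))  # hand over the left edge band
print(classify_zone(0.5, 0.5))   # hand over the middle of the screen
```

A real system would likely tune the band width per device and might let zones extend beyond the physical screen, since the hand is tracked in the air rather than on the glass.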

2. Contextual Visual Feedback for Gesture Readiness

The system provides subtle visual cues, such as retracting or layering the screen UI along the depth axis, when a hand is detected nearby. This visual feedback signals to the user that the system is aware of their presence and ready for interaction. The screen’s reaction invites exploration, gently guiding the user into the gesture-based interaction model.
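One way to picture this feedback is as a mapping from hand distance to how far the UI shifts in depth. The sketch below uses a clamped linear ramp; the distance thresholds, the pixel offset, and both function names are illustrative assumptions, not values taken from the patent.

```python
def readiness_level(distance_cm: float,
                    near_cm: float = 5.0,
                    far_cm: float = 30.0) -> float:
    """Map hand distance to a feedback level between 0 and 1.

    0 means no hand within range; 1 means the hand is at `near_cm` or closer.
    Both thresholds are hypothetical.
    """
    if far_cm <= near_cm:
        raise ValueError("far_cm must be greater than near_cm")
    level = (far_cm - distance_cm) / (far_cm - near_cm)
    return max(0.0, min(1.0, level))  # clamp to [0, 1]


def ui_depth_offset(distance_cm: float, max_offset_px: float = 40.0) -> float:
    """Scale a hypothetical maximum depth offset by the readiness level."""
    return readiness_level(distance_cm) * max_offset_px


for d in (40.0, 30.0, 17.5, 5.0):
    print(d, ui_depth_offset(d))
```

A linear ramp is the simplest choice; an eased curve would make the cue feel softer as the hand first enters range.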

3. Selecting and Navigating 2D Menus with 3D Gestures

The patent outlines methods for navigating traditional two-dimensional menu structures using spatial gestures. By analyzing both the position and direction of a hand’s movement in three-dimensional space, users can highlight, scroll, and select options within a flat menu layout, with no screen contact.
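A minimal sketch of this idea, under stated assumptions: the vertical hand position picks the highlighted row, and a forward "push" toward the screen selects it. The `MenuNavigator` class, the normalized `y` input, the depth reading in centimetres, and the push threshold are all hypothetical; the patent may use different cues for highlighting and selection.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MenuNavigator:
    items: List[str]
    push_threshold_cm: float = 4.0    # hypothetical forward travel that counts as "select"
    highlighted: int = 0
    _rest_z: Optional[float] = None   # farthest depth seen since the highlight last changed

    def __post_init__(self) -> None:
        if not self.items:
            raise ValueError("menu needs at least one item")

    def update(self, y: float, z_cm: float) -> Optional[str]:
        """Feed one hand sample; return the selected item, or None."""
        # Vertical position (normalized 0..1) chooses the highlighted row.
        y = min(max(y, 0.0), 1.0)
        index = min(int(y * len(self.items)), len(self.items) - 1)
        if index != self.highlighted or self._rest_z is None:
            # New row (or first sample): restart the depth baseline.
            self.highlighted = index
            self._rest_z = z_cm
            return None
        # Track the farthest point so a push is measured from there.
        self._rest_z = max(self._rest_z, z_cm)
        if self._rest_z - z_cm >= self.push_threshold_cm:
            self._rest_z = None  # require a fresh baseline before the next select
            return self.items[index]
        return None


nav = MenuNavigator(["Play", "Settings", "Quit"])
for y, z in [(0.5, 20.0), (0.5, 18.0), (0.5, 15.0)]:
    print(nav.highlighted, nav.update(y, z))
```

Resetting the baseline whenever the highlight changes keeps a sideways or vertical drift from being misread as a push.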

4. Custom 3D UI Elements for Gesture Interaction

To complement the 3D input method, the system supports dynamic UI elements specifically designed for gesture input. One example is a rotating radial menu, which affords circular or sweeping gestures to cycle through options. These UI elements are inherently spatial and designed to match the expressiveness of the input method.
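To make the radial menu concrete, the sketch below converts the hand's angle around the menu center into a sector index, so a circular sweep steps through the options in order. The function name, the clockwise-from-top sector layout, and the dead zone radius are assumptions for illustration only.

```python
import math
from typing import Optional


def radial_index(x: float, y: float, n_items: int,
                 cx: float = 0.5, cy: float = 0.5,
                 dead_zone: float = 0.05) -> Optional[int]:
    """Return the sector under the hand, or None inside the central dead zone.

    Coordinates are normalized screen coordinates with y growing downward.
    Sector 0 is centered straight up; indices increase clockwise.
    """
    if n_items < 1:
        raise ValueError("n_items must be at least 1")
    dx, dy = x - cx, y - cy
    if math.hypot(dx, dy) < dead_zone:
        return None  # too close to the center to give a reliable angle
    # Angle measured clockwise from "up"; -dy flips the downward screen axis.
    angle = math.atan2(dx, -dy) % (2 * math.pi)
    width = 2 * math.pi / n_items
    # Shift by half a sector so each sector is centered on its direction.
    return int(((angle + width / 2) % (2 * math.pi)) / width) % n_items


# Sweeping the hand up, right, down, left around a four-item menu.
for point in [(0.5, 0.1), (0.9, 0.5), (0.5, 0.9), (0.1, 0.5)]:
    print(point, radial_index(*point, n_items=4))
```

The dead zone avoids jitter when the hand hovers near the center, where small movements would otherwise swing the angle wildly.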

5. Gesture-Dependent Functionality Based on Position and Type

Beyond location, the system distinguishes between gesture types, such as swipe, push, hold, or circular motion, and can map them to different commands depending on where on the screen they occur. This allows a single gesture type to produce multiple outcomes based on spatial intent, increasing the expressive power of the interface without increasing visual complexity.
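The mapping from gesture type and position to a command can be pictured as a lookup table keyed on both. The sketch below is one possible arrangement; the gesture names, zone labels, command names, and the `"*"` wildcard convention are all hypothetical and chosen only to illustrate the principle.

```python
from typing import Dict, Optional, Tuple

# Hypothetical bindings: the same gesture resolves to different commands by zone.
BINDINGS: Dict[Tuple[str, str], str] = {
    ("swipe_left", "center"): "next_page",
    ("swipe_left", "right_edge"): "open_side_panel",
    ("push", "center"): "select",
    ("circle", "center"): "adjust_volume",
    ("hold", "*"): "show_help",  # "*" marks a zone-independent binding
}


def resolve_command(gesture: str, zone: str,
                    bindings: Dict[Tuple[str, str], str] = BINDINGS) -> Optional[str]:
    """Look up a command for a gesture in a zone.

    A zone-specific binding wins; otherwise the wildcard binding is used.
    Returns None when the gesture has no meaning in that zone.
    """
    specific = bindings.get((gesture, zone))
    if specific is not None:
        return specific
    return bindings.get((gesture, "*"))


print(resolve_command("swipe_left", "center"))
print(resolve_command("swipe_left", "right_edge"))
print(resolve_command("hold", "top_edge"))
```

Keeping the bindings in data rather than in branching code means new gesture and zone combinations can be added without touching the recognizer or the UI layout.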

In essence, this patent lays the groundwork for a highly responsive and spatially expressive gesture interface. The screen responds to proximity, gesture type, and position, allowing rich mid-air interaction. Rather than merely mimicking touch, the approach extends it, offering a broader vocabulary of human input while maintaining clear visual feedback and interaction structure.